How to Track Your Automated Testing Efforts with Test Automation Reporting? Pitfalls and imperative features of testing platforms.

You’ve invested considerable resources in test automation to accelerate repetitive, time-consuming manual processes. You’ve selected tools, defined frameworks, set up the infrastructure, and built automated scripts. You will also have to spend time and money maintaining them. Have you ever wondered whether your automated tests are worth this effort? Let’s talk about test automation reporting, the smart visualization of automated test results: its bottlenecks, how to deal with them, and the metrics you should track to ensure your test automation is delivering value.

Why Test Automation Reporting is essential

Test automation reporting is the main indicator of the state of the software and of whether it can be delivered to users. It is the final step of the automated testing process: the point at which the team decides that enough has been tested and the product can be released. Reporting is carried out alongside test execution.

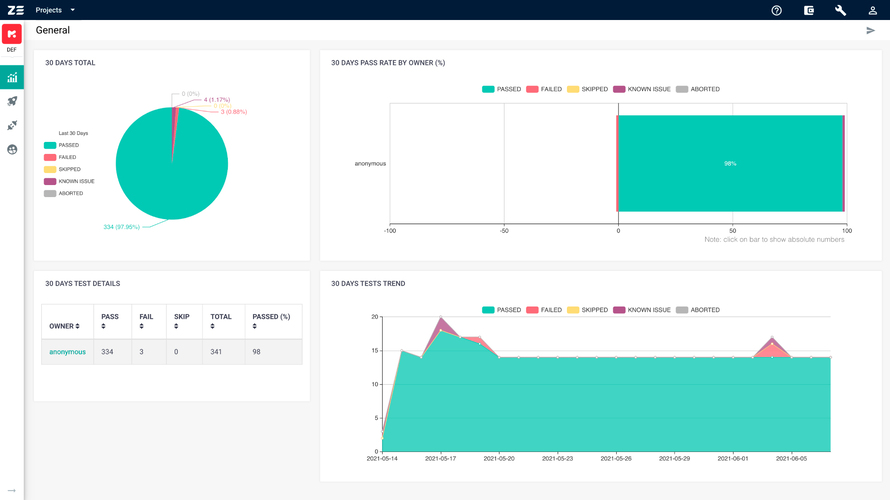

Reports allow you to gather all automated test results and spot issues as soon as they appear. An automation test report shows the total number of scripts that were executed, their duration, the status of each test case or suite (failed, passed, skipped or aborted), failure reasons, execution history, and so on. It helps the team keep an eye on the status of the product and quickly identify issues so that they can be fixed as soon as possible.

Who needs Test Automation Reporting

Automation test reporting serves the whole team. It provides information about the quality of test automation and answers various questions. Did the team meet its testing goals? Were problems found early on? How many unnecessary operations were there? Did the automation test scripts work well? How can the quality of the product be improved? Are we ready to release?

We can distinguish four groups of target audiences for whom deep analysis and timely transparent reporting are important:

- QA engineers. Using test automation reporting tools such as Zebrunner Testing Platform, automation QA engineers can identify failure reasons with the help of AI/ML, rich artifacts (logs, screenshots and videos) and a test history panel. Manual QA engineers, in turn, can achieve traceability between manual test cases and automated tests and, if necessary, submit new bugs to Jira.

- QA management staff. Reports allow them to build specific KPIs for both the testing team and the product under test. Their focus is on release timelines, extracts from test results without too much technical detail, and general statistics (numerical and comparative metrics). They use various dashboards and widgets to track engineering performance (ROI, pass rate, personal statistics per user, etc.).

- C-Level/Business executives/Customers. For them, what matters most is the final result in the most concise and clear format (yes/no), a visual presentation of the information (graphs, diagrams), and an expert opinion on product quality and on whether the product can be released to production, without going into details. It is also crucial for them to see not only the state of the release at the moment but also the historical quality of the software, since customer demand, and therefore the company’s profit, depends on it.

- Developers. They use automation test reporting to check test failures or to pre-analyze feature releases in order to reduce errors in staging and production. Test automation reports are essential for debugging. They let developers see the whole picture of their software’s test coverage rather than only specific features or parts, which minimizes dark areas not covered by tests.

In short, a well-designed automation test report speeds up error fixing and makes it easy to collaborate on test results.

Metrics for tracking the success of Test Automation

As we have found out earlier, reporting is the construction of a readable report on the results of test execution. But what should it contain?

Reporting formats differ depending on the stakeholders’ requirements, the project and its objectives, and the test automation reporting tools used. Regardless of format, the content should provide valuable information and fast feedback on the overall status of the product and help teams identify ways to improve its quality. Everything should be described as simply as possible, but with sufficiently clear detail in the right areas.

Firstly, the report should contain information about:

- Number of test runs

- For each test:

  - its name

  - test suite name

  - result

  - execution time

  - error notification if the test failed

- Number of passed tests

- Number of failed tests

- Runtime of all tests

- Date and time

- Machine/Environment Name
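The fields listed above can be sketched as a small data model. This is only an illustration: the class names `CaseResult` and `RunReport`, the field names and the sample values are assumptions made for the example, not the schema of any particular reporting tool.

```python
from dataclasses import dataclass, field
from datetime import datetime
from typing import List, Optional


@dataclass
class CaseResult:
    """One executed test: the per-test fields from the list above."""
    name: str
    suite: str
    status: str                   # "passed", "failed", "skipped" or "aborted"
    duration_s: float
    error: Optional[str] = None   # error notification, set only for failures


@dataclass
class RunReport:
    """One test run: environment, start time and all case results."""
    environment: str
    started_at: datetime
    results: List[CaseResult] = field(default_factory=list)

    def count(self, status: str) -> int:
        """Number of tests in this run that ended with the given status."""
        return sum(1 for r in self.results if r.status == status)

    def summary(self) -> dict:
        """Run-level figures: totals, pass/fail counts and overall runtime."""
        return {
            "environment": self.environment,
            "started_at": self.started_at.isoformat(),
            "total": len(self.results),
            "passed": self.count("passed"),
            "failed": self.count("failed"),
            "runtime_s": sum(r.duration_s for r in self.results),
        }


# Hypothetical sample run used only to exercise the model.
report = RunReport(
    environment="staging-chrome",
    started_at=datetime(2024, 1, 15, 9, 30),
    results=[
        CaseResult("login", "auth", "passed", 1.5),
        CaseResult("logout", "auth", "passed", 0.5),
        CaseResult("checkout", "cart", "failed", 2.0,
                   error="AssertionError: total mismatch"),
    ],
)
print(report.summary())
```

Keeping the per-test records and deriving the totals from them, rather than storing the totals separately, means the summary can never drift out of sync with the detailed results.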

Additional metrics for automated test reporting:

- Automation Test Coverage %

- Pass Rate %

- Automation Stability %

- Build Stability %

- Automation Progress %

- Automatable test cases %

- Automation Script Effectiveness
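The article does not define formulas for these metrics, and teams define them differently. The sketch below uses one commonly seen set of definitions, all of which are assumptions: automatable % and coverage % are taken relative to all test cases, progress % relative to the automatable cases, and pass rate % relative to the executed tests. The function names and input numbers are made up for illustration.

```python
def percent(part: int, whole: int) -> float:
    """Return part/whole as a percentage, or 0.0 when whole is zero."""
    return round(100.0 * part / whole, 1) if whole else 0.0


def automation_metrics(total_cases: int, automatable: int, automated: int,
                       executed: int, passed: int) -> dict:
    """Compute a few percentage metrics under the assumed definitions."""
    return {
        # share of all test cases that could be automated
        "automatable_pct": percent(automatable, total_cases),
        # share of all test cases that already are automated
        "automation_coverage_pct": percent(automated, total_cases),
        # share of the automatable cases that are already automated
        "automation_progress_pct": percent(automated, automatable),
        # share of executed automated tests that passed
        "pass_rate_pct": percent(passed, executed),
    }


# Hypothetical project figures.
metrics = automation_metrics(total_cases=400, automatable=300,
                             automated=240, executed=240, passed=228)
print(metrics)
```

Whatever definitions a team settles on, it helps to write them down next to the dashboard so that a number such as "coverage" means the same thing to every reader.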

The report may also contain information useful for debugging:

- Full stack trace if test fails

- Test history and results

- Built-in screenshots for each step and/or when the test fails

- Embedded logs and/or other attachments (any text and media files, including HTML dump pages)

- Video sessions

- Variable values used in data-driven testing (DDT)

- Test environment variable values (application version, browser, etc.)

Besides, a good report should preferably contain dashboards with various visual information:

- Charts and graphs about test results

- Charts and graphs about test coverage

- And other visual summary information.

Challenges in Test Automation Reporting and Automated Testing

Modern development based on Agile, DevOps and CI/CD has changed the perception of what a good test report is. Several problems can arise on the way to a high-quality automation test report. Let’s take a look at them.

Short time-frame

As we’ve already mentioned, a test automation report is one of the final steps in the software development process. Previously, there were far fewer releases, so there was enough time to summarize the results, draw up a report and make a decision. With modern approaches and fast releases, the testing process and reporting must go hand in hand. The team must decide on the quality of the product and fix possible issues within a short period of time (a week or even days). If the right information is not delivered at the right time to the right teams in the development pipeline, the release is delayed or ships with dubious quality.

Noisy, voluminous data

The testing process generates a mountain of data: automation scripts and test results on different devices, in various browsers and versions. Many companies suffer from an excessive amount of testing data, which makes it hard to determine what is valuable and what is not worth considering. Noise is created by unreliable test cases, environmental instability, and other problems that cause false negative or false positive results. As a result, more time is needed to analyze the test results and understand the failure reasons.
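One simple way to separate this kind of noise from real failures is to look for tests that both passed and failed against the same build, since the code under test did not change between those executions. The sketch below assumes the history is available as `(test_name, build_id, status)` tuples; that record shape, the function name and the sample data are assumptions for illustration, and real tools may use more elaborate heuristics.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Set, Tuple


def find_flaky_tests(history: Iterable[Tuple[str, str, str]]) -> List[str]:
    """Return names of tests that both passed and failed on the same build."""
    outcomes: Dict[Tuple[str, str], Set[str]] = defaultdict(set)
    for test, build, status in history:
        # Skipped or aborted executions say nothing about flakiness here.
        if status in ("passed", "failed"):
            outcomes[(test, build)].add(status)
    flaky = {test for (test, _build), statuses in outcomes.items()
             if len(statuses) > 1}
    return sorted(flaky)


# Hypothetical execution history.
history = [
    ("login", "b1", "failed"),
    ("login", "b1", "passed"),      # same build, different outcome: flaky
    ("checkout", "b1", "failed"),
    ("checkout", "b1", "failed"),   # consistently failing: likely a real issue
    ("search", "b1", "passed"),
    ("search", "b2", "failed"),     # different builds: could be a regression
]
print(find_flaky_tests(history))
```

Tests flagged this way can be quarantined or reviewed separately, so that consistent failures, which are more likely to point at real defects, stand out in the report.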

Absence of a single source of truth

This issue is closely related to the previous one and is especially relevant for large organizations. It all comes down to a lot of testing data from multiple sources: numerous teams and people, various test tools and frameworks. In this case, the organization needs a single tool to collect, sort and properly use this data. Otherwise, there is an increased risk of missing a significant issue.
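As a rough illustration of collecting results from several sources into one place, the sketch below merges JUnit-style XML reports, a format many test frameworks and CI tools can emit, into a single flat list. It assumes the common `testsuite`/`testcase` layout with `failure`, `error` and `skipped` child elements; the source names, the sample XML and the function names are invented for the example.

```python
import xml.etree.ElementTree as ET
from collections import Counter
from typing import Dict, List


def case_status(case: ET.Element) -> str:
    """Derive a status from the child elements of a <testcase>."""
    if case.find("failure") is not None or case.find("error") is not None:
        return "failed"
    if case.find("skipped") is not None:
        return "skipped"
    return "passed"


def merge_reports(xml_documents: Dict[str, str]) -> List[dict]:
    """Flatten several JUnit-style XML documents into one list of rows."""
    rows = []
    for source, xml_text in xml_documents.items():
        root = ET.fromstring(xml_text)
        # iter() also matches the root itself when it is a <testsuite>.
        for suite in root.iter("testsuite"):
            for case in suite.findall("testcase"):
                rows.append({
                    "source": source,
                    "suite": suite.get("name", ""),
                    "test": case.get("name", ""),
                    "status": case_status(case),
                    "time_s": float(case.get("time", "0")),
                })
    return rows


# Hypothetical reports from two teams using different tools.
UI_XML = """<testsuite name="ui">
  <testcase name="login" time="1.2"/>
  <testcase name="checkout" time="3.4"><failure message="button not found"/></testcase>
</testsuite>"""
API_XML = """<testsuites><testsuite name="api">
  <testcase name="get_user" time="0.1"/>
  <testcase name="delete_user" time="0.2"><skipped/></testcase>
</testsuite></testsuites>"""

rows = merge_reports({"ui-team": UI_XML, "api-team": API_XML})
print(Counter(row["status"] for row in rows))
```

Once every result is in the same shape, regardless of which team or framework produced it, the same dashboards and metrics can be applied across the whole organization.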

Test automation reporting and automated testing with Zebrunner Testing Platform

We have already discussed the value of a test automation report, the main users who benefit from it, the metrics that a test report should contain, and the challenges that the QA team faces. In this section we will consider one more issue that has a direct impact on the success of automation test reporting and automated testing: choosing the right tool.

Let’s look at the features that a decent test automation reporting tool must have:

AI/ML-based analysis.

This mechanism can cut the time spent analyzing automated test results several times over and helps you prioritize your daily activities. Machine learning automatically classifies failures on the basis of their stack traces, and a label (business, locator, infra issue, etc.) can be assigned by default.
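To make the idea of labeling failures by stack trace concrete, here is a deliberately simple rule-based stand-in. It is not machine learning and not how Zebrunner or any other tool implements its classifier; it only shows the input (a stack trace) and the output (one of the labels mentioned above). The patterns and the fallback label `unclassified` are assumptions chosen for illustration.

```python
import re

# Ordered rules: the first pattern that matches decides the label.
RULES = [
    ("locator", re.compile(r"NoSuchElementException|StaleElementReferenceException")),
    ("infra", re.compile(r"ConnectException|Connection refused|SessionNotCreatedException")),
    ("business", re.compile(r"AssertionError")),
]


def classify_failure(stack_trace: str) -> str:
    """Return a failure label for a stack trace, or 'unclassified'."""
    for label, pattern in RULES:
        if pattern.search(stack_trace):
            return label
    return "unclassified"


# Hypothetical stack trace fragments.
traces = [
    "org.openqa.selenium.NoSuchElementException: no such element: #submit",
    "java.net.ConnectException: Connection refused",
    "java.lang.AssertionError: expected [42] but found [41]",
    "java.lang.NullPointerException",
]
for trace in traces:
    print(classify_failure(trace), "<-", trace)
```

A learned classifier would replace the hand-written rules with a model trained on previously labeled failures, but the way the report consumes the result, one label per failed test, stays the same.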

Test failure analysis via rich artifacts in one place.

A test automation analytics and reporting tool must collect results for all executed tests, so that you can easily track down and resolve a defect using interactive video sessions, screenshots, published logs and a test history panel.

Ready-made and customized dashboards.

Different types of widgets illustrate advanced metrics for automation results, root cause analysis and engineering performance (pass rate, defect count, failures by reason, test coverage/automation growth, test execution ROI, etc.).

Collaboration & teamwork.

The tool presents all test results collected in the CI pipeline in one single dashboard. You can check the analytics of several projects and teams within one organization and collaborate with team members by sharing test results via email, as an exported HTML report, or with a shareable link.

Smooth integration with your existing workflow.

It is important to choose a test automation reporting tool that can be easily embedded into your automation process. The tool should integrate with numerous third-party frameworks and tools (CI/CD tools, cloud providers, bug tracking and test case management tools, notification services and team communication apps).

User Interface and Usability.

Last but not least, a user-friendly interface and the ability to learn the tool quickly and easily (tech support, tutorials, and training) also matter.

Conclusion on Automated Testing

Every company spends time and money creating its automated testing system. This is especially critical for automated tests of distributed systems, multithreaded launches and large numbers of test cases, for both large and small teams. It is therefore crucial to have a full-featured tool for transparent, deep analytics and reporting that can be easily built into your delivery process. Efficient automation test reporting provides a single place to store all your test results, with smart dashboards, multiple widgets, unique metrics, rich analytic artifacts (logs, screenshots, videos), a test history line and automatic detection of new crashes based on AI/ML (trained with each new run).

Ananya Prisha is an enterprise level Agile coach working out of Hyderabad (India) and also founder of High Level PM Consultancy. Her goal has been to keep on learning and at the same time give back to the community that has given her so much.